Anthropic Accuses Chinese AI Labs of Massive Model Distillation Scheme

By admin | Feb 23, 2026 | 3 min read

Anthropic has accused three Chinese AI firms, DeepSeek, Moonshot AI, and MiniMax, of creating more than 24,000 fraudulent accounts to interact with its Claude AI model. The companies reportedly generated more than 16 million exchanges with Claude and used them for “distillation,” a technique meant to improve their own AI systems. According to Anthropic, the labs focused on extracting Claude’s most advanced capabilities, including agentic reasoning, tool use, and coding.

The claims come amid an ongoing debate over how strictly to enforce export restrictions on advanced AI chips, a measure intended to slow China’s progress in artificial intelligence. Distillation is a legitimate technique: AI developers often use it to train smaller, more efficient versions of their own models on the outputs of larger ones. Rivals can also exploit it to effectively replicate the innovations of others. Earlier this month, OpenAI told congressional lawmakers that DeepSeek had used distillation to imitate its products. DeepSeek first gained attention a year ago when it released its open-source R1 reasoning model, which delivered performance comparable to models from leading U.S. labs at significantly lower cost. The company is now preparing to launch DeepSeek V4, its newest model, which reportedly outperforms both Anthropic’s Claude and OpenAI’s ChatGPT on coding tasks.
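For readers unfamiliar with the term, the sketch below shows the textbook form of distillation, in which a small "student" model is trained to match the softened output probabilities of a larger "teacher." It is illustrative only and is not a description of what any company named in this story did. The function names, the example numbers, and the temperature value are assumptions made for this example.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Scaling by temperature squared is the usual convention in soft-target
    distillation; it keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # teacher: the target
    q = softmax(student_logits, temperature)  # student: being trained
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    # Hypothetical scores over three candidate answers.
    teacher = [2.0, 1.0, 0.1]
    student = [0.1, 1.0, 2.0]
    print("loss when student disagrees:", distillation_loss(teacher, student))
    print("loss when student matches:  ", distillation_loss(teacher, teacher))
```

Training drives this loss toward zero, so the student's answers drift toward the teacher's. When only a model's text responses are available rather than its internal scores, the same idea is typically applied by training the student directly on the collected responses.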

The scale and focus of the alleged activity varied among the three firms. Anthropic said it tracked more than 150,000 exchanges from DeepSeek that appeared designed to refine core logic and alignment, particularly in crafting responses to policy-sensitive queries that avoid censorship. Moonshot AI was linked to more than 3.4 million exchanges targeting agentic reasoning, tool use, coding, data analysis, computer-agent development, and computer vision. Last month, Moonshot introduced a new open-source model, Kimi K2.5, along with a coding agent. MiniMax, for its part, was associated with 13 million exchanges concentrated on agentic coding, tool use, and workflow orchestration. Anthropic said it observed MiniMax redirecting nearly half of its traffic to extract capabilities from the latest Claude model shortly after that model's release.

In response, Anthropic has committed to strengthening its defenses to make distillation attacks more difficult to carry out and easier to detect. The company is also advocating for a unified effort across the AI industry, cloud service providers, and policymakers to address the issue.

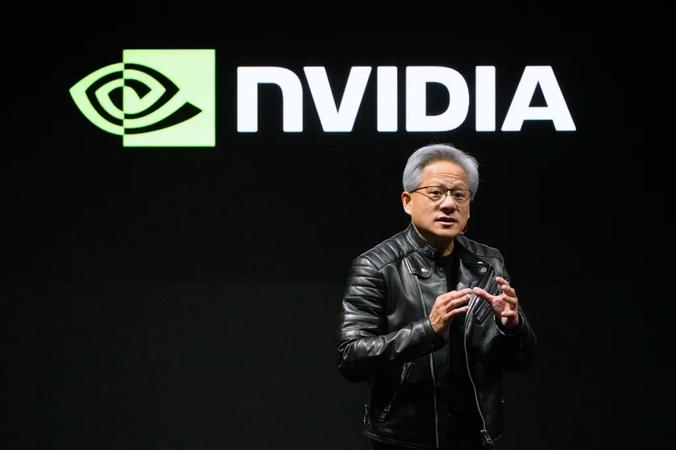

The allegations land in the middle of a continuing debate over U.S. chip exports to China. Last month, the Trump administration authorized companies such as Nvidia to ship advanced AI chips, including the H200, to China. Critics contend that relaxing these export controls boosts China’s AI computing power at a pivotal moment in the global competition for AI leadership. Anthropic argues that extraction at the scale attributed to DeepSeek, MiniMax, and Moonshot would not be possible without access to high-end chips.

In a blog post, Anthropic stated, “Distillation attacks therefore reinforce the rationale for export controls: restricted chip access limits both direct model training and the scale of illicit distillation.” Security expert Dmitri Alperovitch, chairman of the Silverado Policy Accelerator think tank and a co-founder of CrowdStrike, remarked, “It’s been clear for a while now that part of the reason for the rapid progress of Chinese AI models has been theft via distillation of US frontier models. Now we know this for a fact. This should give us even more compelling reasons to refuse to sell any AI chips to any of these [companies], which would only advantage them further.”

Anthropic further warned that distillation poses not only a threat to American AI supremacy but also potential national security risks. The company’s blog post explained, “Anthropic and other U.S. companies build systems that prevent state and non-state actors from using AI to, for example, develop bioweapons or carry out malicious cyber activities. Models built through illicit distillation are unlikely to retain those safeguards, meaning that dangerous capabilities can proliferate with many protections stripped out entirely.”

The post also raised concerns about authoritarian regimes deploying advanced AI for offensive cyber operations, disinformation campaigns, and mass surveillance, risks that could be amplified if such models are open-sourced.
