Anthropic Launches AI Code Reviewer to Combat Bugs and Security Risks in AI-Generated Software

By admin | Mar 09, 2026 | 3 min read

In software development, peer feedback is essential for catching bugs early, keeping a codebase consistent, and improving the quality of the final product. The rise of "vibe coding", in which AI tools turn plain-language instructions into large volumes of generated code, has transformed how developers work. These tools speed up development, but they also introduce new kinds of bugs, security vulnerabilities, and code that can be hard to understand.

Anthropic has introduced an AI-powered reviewer designed to catch those bugs before they reach a project's codebase. The product, called Code Review, launched on Monday inside Claude Code.

"We’ve seen a lot of growth in Claude Code, especially within the enterprise, and one of the questions that we keep getting from enterprise leaders is: Now that Claude Code is putting up a bunch of pull requests, how do I make sure that those get reviewed in an efficient manner," said Wu. Pull requests are how developers submit code changes for review before they are merged. Wu explained that Claude Code has sharply increased code output, which has in turn created a bottleneck as the volume of pull requests awaiting review keeps rising. "Code Review is our answer to that," Wu stated.

Anthropic is releasing Code Review as a research preview for Claude for Teams and Claude for Enterprise customers, and the launch comes at a critical moment for the company. On the same day, Anthropic filed two lawsuits against the Department of Defense in response to the agency designating Anthropic a supply chain risk. The dispute may push the company to rely more heavily on its fast-growing enterprise business, where subscriptions have quadrupled since the start of the year. According to the company, Claude Code’s run-rate revenue has surpassed $2.5 billion since its launch.

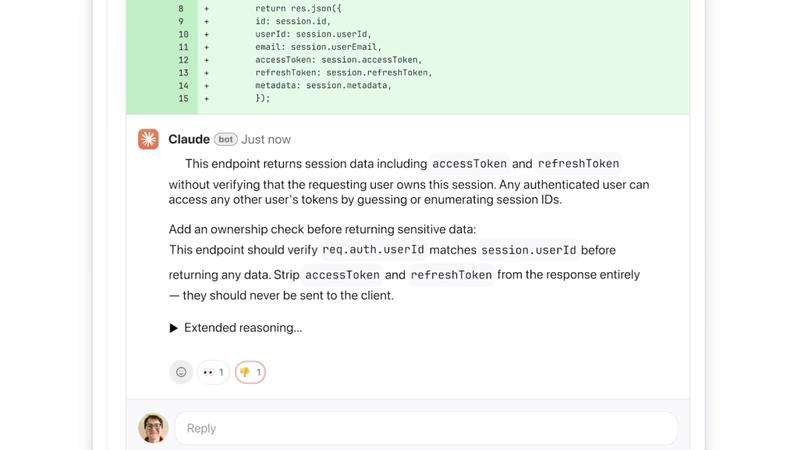

"This product is very much targeted towards our larger scale enterprise users, so companies like Uber, Salesforce, Accenture, who already use Claude Code and now want help with the sheer amount of [pull requests] that it’s helping produce," Wu explained. She added that development leads can enable Code Review by default for every engineer on their team. Once activated, it integrates with GitHub to automatically analyze pull requests, posting comments directly on the code that explain potential issues and suggest fixes. The focus is on correcting logical errors rather than stylistic concerns, Wu emphasized.

"This is really important because a lot of developers have seen AI automated feedback before, and they get annoyed when it’s not immediately actionable," Wu said. "We decided we’re going to focus purely on logic errors. This way we’re catching the highest priority things to fix."

The AI explains its reasoning step by step, describing the issue it found, why it could cause problems, and how to fix it. Findings are color-coded by severity: red for the most critical problems, yellow for potential issues worth reviewing, and purple for issues tied to pre-existing code or historical bugs.
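To make the three-tier scheme concrete, here is a minimal Python sketch of how a review tool might model and order such findings. The `Severity` and `Finding` types and the `order_for_display` function are hypothetical illustrations, not Code Review's actual data model or API. Ranking purple below yellow is also an assumption, since the article only says red marks the most critical problems.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical mapping of the color tiers; lower value = higher priority (assumed order)."""
    RED = 0      # most critical problems
    YELLOW = 1   # potential issues worth reviewing
    PURPLE = 2   # issues tied to pre-existing code or historical bugs


@dataclass(frozen=True)
class Finding:
    """Hypothetical review comment: what is wrong, why it matters, how to fix it."""
    path: str
    line: int
    severity: Severity
    issue: str
    why: str
    fix: str


def order_for_display(findings: list[Finding]) -> list[Finding]:
    """Sort findings so red items come first, then by file path and line number."""
    return sorted(findings, key=lambda f: (f.severity, f.path, f.line))


if __name__ == "__main__":
    findings = [
        Finding("billing.py", 88, Severity.PURPLE, "Rounding bug predates this PR",
                "Totals can drift by a cent", "Use decimal.Decimal for currency"),
        Finding("auth.py", 12, Severity.RED, "Token expiry check is inverted",
                "Expired tokens are accepted", "Reject tokens whose expiry is in the past"),
        Finding("api.py", 40, Severity.YELLOW, "Unchecked None return",
                "May raise AttributeError on empty results", "Guard the None case"),
    ]
    # Prints the RED finding first, then YELLOW, then PURPLE.
    for f in order_for_display(findings):
        print(f"[{f.severity.name}] {f.path}:{f.line} {f.issue}")
```

Keeping the "why" and "fix" fields alongside the issue mirrors the article's description of each comment explaining the problem, its impact, and a suggested fix.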

Wu said the system stays fast by running multiple AI agents in parallel, each examining the code from a different angle. A final agent then consolidates the findings, removes duplicates, and ranks them so the most important items come first. The tool includes a basic security analysis, and engineering leads can add custom checks based on their team's internal best practices. For a more thorough security review, Wu said, Anthropic offers its recently launched Claude Code Security product.
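The fan-out/fan-in pattern Wu describes can be sketched in Python as follows. This is an illustration under stated assumptions, not Anthropic's implementation: the three reviewer functions are hypothetical toy stand-ins (simple string checks) for model-backed agents, and merging duplicates by line and message while keeping the most severe copy is just one plausible consolidation rule. Passing the reviewer list as a parameter loosely mirrors how a team could plug in its own custom checks.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable

# Hypothetical severity ranks (lower = more urgent): red, yellow, purple.
RED, YELLOW, PURPLE = 0, 1, 2


@dataclass(frozen=True)
class Finding:
    line: int       # 1-based line number in the diff
    severity: int   # one of RED, YELLOW, PURPLE
    message: str


# A reviewer takes the changed lines and returns its findings.
Reviewer = Callable[[list[str]], list[Finding]]


def null_check_reviewer(diff: list[str]) -> list[Finding]:
    """Hypothetical logic agent: flags chained access on a .get() result that may be None."""
    return [Finding(i, YELLOW, "possible None dereference")
            for i, text in enumerate(diff, start=1)
            if ".get(" in text and ")." in text]


def secrets_reviewer(diff: list[str]) -> list[Finding]:
    """Hypothetical security agent: flags hard-coded passwords."""
    return [Finding(i, RED, "hard-coded credential")
            for i, text in enumerate(diff, start=1)
            if "password =" in text]


def config_reviewer(diff: list[str]) -> list[Finding]:
    """Hypothetical config agent whose checks overlap with secrets_reviewer."""
    return [Finding(i, YELLOW, "hard-coded credential")
            for i, text in enumerate(diff, start=1)
            if "password" in text.lower()]


def consolidate(findings: list[Finding]) -> list[Finding]:
    """Final agent: merge duplicates (same line and message), keep the most
    severe copy, then rank by severity and line."""
    best: dict[tuple[int, str], Finding] = {}
    for f in findings:
        key = (f.line, f.message)
        if key not in best or f.severity < best[key].severity:
            best[key] = f
    return sorted(best.values(), key=lambda f: (f.severity, f.line))


def review(diff: list[str], reviewers: list[Reviewer]) -> list[Finding]:
    """Fan out: run every reviewer concurrently on the same diff.
    Fan in: pass all raw findings to the consolidator."""
    # Threads suit this shape because real agents would mostly wait on network calls.
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        batches = list(pool.map(lambda r: r(diff), reviewers))
    return consolidate([f for batch in batches for f in batch])


if __name__ == "__main__":
    diff = [
        'user = db.get("alice").name',
        'password = "hunter2"',
        "total = price * qty",
    ]
    for f in review(diff, [null_check_reviewer, secrets_reviewer, config_reviewer]):
        print(f.line, f.severity, f.message)
```

Running it prints the red credential finding (reported by two reviewers but kept once) ahead of the yellow `None` warning.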

Wu acknowledged that this multi-agent architecture can make the product resource-intensive. As with other AI services, pricing is based on token usage, so costs vary with code complexity, though Wu estimated the average review would cost between $15 and $25. She described it as a premium experience, and a necessary one as AI tools generate ever-increasing amounts of code.

"[Code Review] is something that’s coming from an insane amount of market pull," Wu said. "As engineers develop with Claude Code, they’re seeing the friction to creating a new feature [decrease], and they’re seeing a much higher demand for code review. So we’re hopeful that with this, we’ll enable enterprises to build faster than they ever could before, and with much fewer bugs than they ever had before."
