DeepSeek Launches V4 Flash and V4 Pro: New Mixture-of-Experts AI Models with 1 Million Token Context Windows

By admin | Apr 24, 2026 | 2 min read

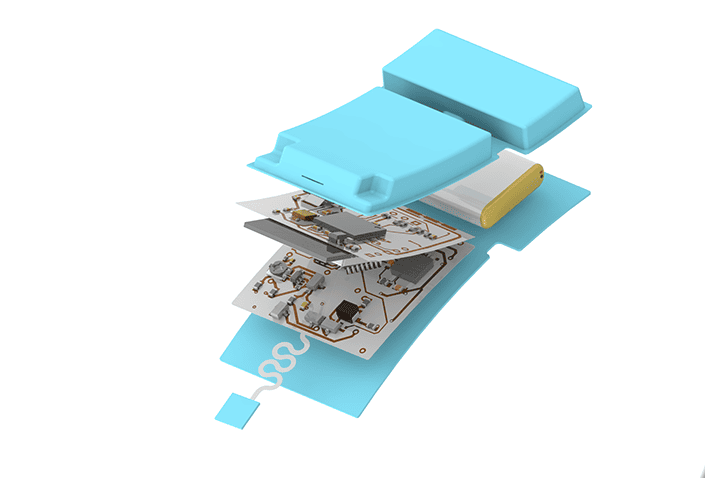

Chinese artificial intelligence lab DeepSeek has introduced two preview versions of its latest large language model, DeepSeek V4, a highly anticipated upgrade to last year’s V3.2 model and the R1 reasoning model that previously captivated the AI community. According to the company, both DeepSeek V4 Flash and V4 Pro are mixture-of-experts models, each featuring a context window of 1 million tokens. This capacity allows users to include extensive codebases or large documents directly in their prompts. A mixture-of-experts architecture activates only a subset of the model’s parameters for each token it processes, which helps reduce inference costs.
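To make the mixture-of-experts idea concrete, here is a minimal toy sketch of top-k expert routing in plain Python. It is not DeepSeek's implementation: the function names (`top_k_route`, `moe_forward`), the four scalar "experts," the router scores, and the choice of k = 2 are all assumptions made for illustration. The point is only that experts outside the selected set are never evaluated, which is where the compute savings come from.

```python
import math

def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and return (index, weight) pairs.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, router_logits, k=2):
    """Run only the k selected experts and blend their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Hypothetical toy setup: four "experts" that each just scale their input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, -1.0, 1.0]  # made-up router scores for a single token

routed = top_k_route(logits, k=2)
output = moe_forward(1.0, experts, logits, k=2)
print("selected experts:", [i for i, _ in routed])
print("blended output:", round(output, 3))
```

In a real model the router is a learned layer, the experts are feed-forward networks, and routing happens independently for every token at every mixture-of-experts layer.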

The Pro version boasts a total of 1.6 trillion parameters, with 49 billion active at any given time. This makes it the largest open-weight model currently available, surpassing Moonshot AI’s Kimi K2.6 (1.1 trillion parameters), MiniMax’s M1 (456 billion), and more than doubling DeepSeek V3.2 (671 billion). The smaller V4 Flash model, on the other hand, contains 284 billion parameters (13 billion active). DeepSeek claims that both models are more efficient and performant than V3.2, thanks to architectural improvements, and have nearly “closed the gap” with leading models—both open and closed—on reasoning benchmarks.
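As a quick back-of-the-envelope check on those figures, the short sketch below computes what share of each model's parameters is active and how V4 Pro's total compares with V3.2. The parameter counts are the ones quoted above; the dictionary and function names are illustrative only.

```python
# Parameter counts as quoted in this article: (total, active).
MODELS = {
    "V4 Pro": (1.6e12, 49e9),
    "V4 Flash": (284e9, 13e9),
}
V3_2_TOTAL = 671e9

def active_fraction(total, active):
    """Share of a model's parameters that is active."""
    return active / total

for name, (total, active) in MODELS.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active")

pro_vs_v3_2 = MODELS["V4 Pro"][0] / V3_2_TOTAL
print(f"V4 Pro total vs. V3.2 total: {pro_vs_v3_2:.2f}x")
```

By this arithmetic, only about 3% of V4 Pro's parameters and under 5% of V4 Flash's are active, and V4 Pro's total parameter count is a little under 2.4 times that of V3.2.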

The company asserts that its new V4-Pro-Max model surpasses other open-source peers in reasoning benchmarks and even outperforms OpenAI’s GPT-5.2 and Google’s Gemini 3.0 Pro on certain tasks. In coding competition benchmarks, DeepSeek states that both V4 models deliver performance “comparable to GPT-5.4.” In knowledge tests, however, the models appear to lag slightly behind two frontier models: OpenAI’s GPT-5.4 and Google’s latest Gemini 3.1 Pro. This shortfall suggests a “developmental trajectory that trails state-of-the-art frontier models by approximately 3 to 6 months,” the lab noted.

It is worth highlighting that both V4 Flash and V4 Pro support text only, unlike many closed-source competitors, which can also understand and generate audio, video, and images. On a more positive note, DeepSeek V4 is significantly more affordable than any frontier model currently on the market. The smaller V4 Flash model costs $0.14 per million input tokens and $0.28 per million output tokens, undercutting GPT-5.4 Nano, Gemini 3.1 Flash, GPT-5.4 Mini, and Claude Haiku 4.5. The larger V4 Pro model, meanwhile, costs $0.145 per million input tokens and $3.48 per million output tokens, also undercutting Gemini 3.1 Pro, GPT-5.5, Claude Opus 4.7, and GPT-5.4.
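For a rough sense of what those rates mean per request, the sketch below estimates the cost of a single call from its token counts. The per-million-token prices are the ones quoted in this article; the `PRICES` table, the `request_cost` helper, and the example token counts are hypothetical. The estimate assumes flat per-token pricing and ignores any caching discounts or pricing tiers a provider may apply.

```python
# USD per million tokens, as quoted in this article: (input, output).
PRICES = {
    "V4 Flash": (0.14, 0.28),
    "V4 Pro": (0.145, 3.48),
}

def request_cost(model, input_tokens, output_tokens):
    """Estimate the cost in USD of one request under flat per-token pricing."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000

# Hypothetical request: a prompt that fills the 1M-token context window,
# followed by a 2,000-token answer.
flash_cost = request_cost("V4 Flash", 1_000_000, 2_000)
pro_cost = request_cost("V4 Pro", 1_000_000, 2_000)
print(f"V4 Flash: ${flash_cost:.4f}")
print(f"V4 Pro:   ${pro_cost:.4f}")
```

Because input tokens dominate a long-context request like this one, the total depends far more on the input rate than on the output rate.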

This launch comes just one day after the U.S. accused China of stealing American AI labs’ intellectual property on an industrial scale, using thousands of proxy accounts. DeepSeek itself has faced accusations from Anthropic and OpenAI of “distilling,” essentially copying, their AI models.
