Guide Labs Launches AI Transparency Platform to Decode Complex Neural Networks

By admin | Feb 23, 2026 | 4 min read

Understanding why a deep learning model behaves the way it does remains a significant challenge. This is evident in the ongoing efforts to adjust Grok's unconventional political responses, ChatGPT's tendencies toward sycophancy, and the common issue of AI hallucinations. Navigating a neural network with billions of parameters is inherently complex.

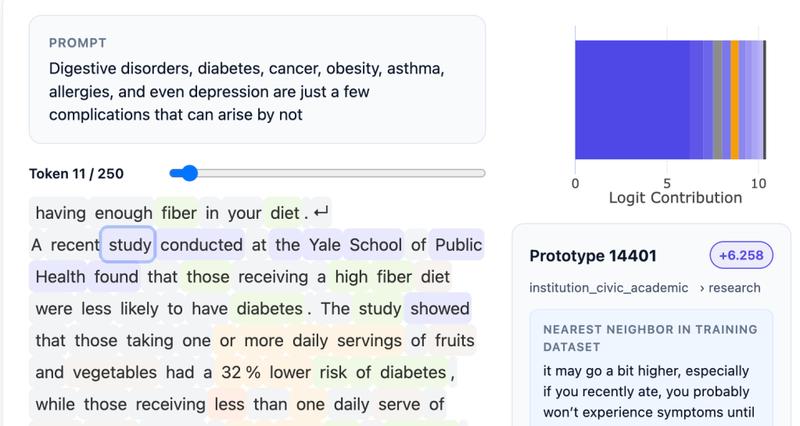

A San Francisco startup called Guide Labs, founded by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, is now presenting a potential solution. The company recently open-sourced an 8 billion parameter large language model named Steerling-8B. This model features a novel architecture specifically designed for interpretability, allowing every token it generates to be traced back to its source within the training data. This capability ranges from simply identifying the references for factual statements to analyzing the model's comprehension of nuanced concepts like humor or gender. "You can attempt this with current models, but the process is fragile... It represents one of the holy grail questions in the field," Adebayo noted.

Adebayo initiated this research during his PhD studies at MIT. He co-authored an influential 2020 paper demonstrating that existing methods for interpreting deep learning models were unreliable. This foundational work eventually led to a new approach for constructing LLMs, which involves inserting a conceptual layer that organizes data into traceable categories. While this method demands more extensive initial data annotation, the team leveraged other AI models to assist, enabling them to train Steerling-8B as their largest proof of concept to date. "Typical interpretability work is akin to performing neuroscience on a model. We invert that paradigm," Adebayo explained. "We engineer the model from its foundation so that such intensive analysis becomes unnecessary."
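The article does not describe Steerling-8B's internals, so the following is only a toy illustration of the general idea of a concept layer, not Guide Labs' architecture. It assumes a model whose output is computed solely from a small set of named concept scores, which is what makes each prediction decomposable into per-concept contributions. The class name `ConceptBottleneck`, the concept names, and all weights are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass
class ConceptBottleneck:
    """Toy model: input features -> named concept scores -> one output score.

    Hypothetical illustration only; not the Steerling-8B architecture.
    """
    concept_names: List[str]
    concept_weights: List[List[float]]  # one row of feature weights per concept
    output_weights: List[float]         # one output weight per concept
    output_bias: float = 0.0

    def concepts(self, features: List[float]) -> Dict[str, float]:
        # Each concept score is a linear function of the input features.
        return {
            name: sum(w * x for w, x in zip(row, features))
            for name, row in zip(self.concept_names, self.concept_weights)
        }

    def contributions(self, features: List[float]) -> Dict[str, float]:
        # The output depends only on concept scores, so it splits cleanly
        # into one additive term per named concept.
        scores = self.concepts(features)
        return {
            name: weight * scores[name]
            for name, weight in zip(self.concept_names, self.output_weights)
        }

    def predict(self, features: List[float],
                blocked: FrozenSet[str] = frozenset()) -> float:
        # Concepts listed in `blocked` are excluded from the output entirely.
        parts = self.contributions(features)
        return self.output_bias + sum(
            value for name, value in parts.items() if name not in blocked
        )


# Made-up weights and inputs, chosen only to keep the arithmetic easy to follow.
model = ConceptBottleneck(
    concept_names=["humor", "finance"],
    concept_weights=[[1.0, 0.0], [0.0, 2.0]],
    output_weights=[0.5, -1.0],
    output_bias=0.1,
)
features = [2.0, 3.0]
parts = model.contributions(features)
full_score = model.predict(features)
steered_score = model.predict(features, blocked=frozenset({"finance"}))
```

Because the output is a sum over named concepts, `parts` shows how much each concept moved the prediction, and passing `blocked` removes a concept's influence without retraining. A real language model would need far more than this linear sketch, but the same bottleneck principle is what allows tracing and control by construction.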

A potential concern with this structured approach is that it might suppress the emergent behaviors that make LLMs fascinating—their ability to generalize and reason about topics beyond their explicit training. Adebayo asserts that his company's model still exhibits this quality; his team monitors what they term "discovered concepts," such as quantum computing, which the model identifies independently. He contends that this interpretable architecture will become essential across the board.

For consumer-facing LLMs, these techniques could enable developers to block the use of copyrighted material or exert finer control over outputs related to sensitive subjects like violence or drug abuse. Regulated industries, such as finance, will require highly controllable models. For instance, an LLM evaluating loan applications must consider financial history while rigorously excluding factors like race. Interpretability is also critical in scientific research, another area where Guide Labs has developed technology. While deep learning has achieved breakthroughs in fields like protein folding, scientists need greater insight into why their software arrives at successful solutions. "What this model demonstrates is that training interpretable models is no longer purely a scientific pursuit; it's an engineering challenge," Adebayo stated. "We have solved the core science and can now scale these models. There's no inherent reason why this approach cannot match the performance of leading frontier models," he added, referring to systems that often have far more parameters.

Guide Labs reports that Steerling-8B achieves approximately 90% of the capability of existing models while utilizing less training data, thanks to its innovative design. The company, which graduated from Y Combinator and secured a $9 million seed round from Initialized Capital in November 2024, plans to develop a larger model next and begin offering API and agentic access to users. "As we pursue the development of super-intelligent models, it's crucial that systems making decisions on your behalf are not mysterious black boxes," Adebayo emphasized.
