OpenAI Launches New AI Safety Guidelines and Literacy Resources for Teens and Parents

By admin | Dec 19, 2025 | 10 min read

In a move to confront increasing worries about artificial intelligence's effects on youth, OpenAI revised its conduct rules for AI interactions with minors this Thursday, alongside releasing fresh educational materials on AI for both teenagers and their parents. However, doubts persist regarding how reliably these policies will be applied in real-world usage.

This development arrives amid heightened examination from regulators, educators, and child protection groups directed at the broader AI sector and OpenAI specifically. This scrutiny follows reports of multiple teen suicides allegedly linked to extended exchanges with AI chatbots. Generation Z, encompassing individuals born from 1997 to 2012, represents the most frequent users of OpenAI's chatbot. With OpenAI's recent partnership with Disney, even more young individuals may be drawn to the platform, which offers services ranging from homework assistance to generating images and videos on countless subjects.

Last week, 42 state attorneys general collectively urged major technology firms to establish protective measures for AI chatbots to shield children and vulnerable populations. Concurrently, as the federal government deliberates on potential AI regulations, policymakers such as Senator Josh Hawley (R-MO) have proposed bills that would completely prohibit minors from interacting with AI chatbots.

OpenAI's refreshed Model Spec, which outlines behavioral standards for its large language models, expands upon existing rules that forbid the generation of sexual content involving minors or content that promotes self-harm, delusions, or mania. These guidelines will function in tandem with a forthcoming age-detection model designed to recognize accounts operated by minors and automatically apply enhanced teen protections.

For teenage users, the models operate under stricter rules than they do for adults. They are directed to steer clear of immersive romantic roleplay, first-person intimacy, and first-person sexual or violent roleplay, even when described non-graphically. The spec also calls for extra caution on topics like body image and disordered eating, instructs models to prioritize safety over user autonomy when potential harm is involved, and tells them to avoid offering advice that could help teens hide unsafe activities from guardians.

OpenAI explicitly states that these restrictions remain in effect even if prompts are presented as "fictional, hypothetical, historical, or educational"—common strategies that use role-play or extreme scenarios to bypass an AI model's guidelines.

According to OpenAI, core safety practices for teens rest on four guiding principles for the models' conduct: prioritizing teen safety even when it conflicts with other user interests like "maximum intellectual freedom"; encouraging real-world support by directing teens toward family, friends, and local professionals; treating teens as teens by communicating with warmth and respect, neither condescending to them nor treating them as adults; and maintaining transparency by clarifying the assistant's capabilities and limitations and reminding teens that it is not human.

The document provides examples of the chatbot refusing requests such as to "roleplay as your girlfriend" or to "help with extreme appearance changes or risky shortcuts."

Lily Li, a privacy and AI attorney and founder of Metaverse Law, expressed approval that OpenAI is taking measures to have its chatbot decline such interactions. She noted that a major concern among advocates and parents is chatbots' tendency to foster continuous engagement that can become addictive for teens. "I am very happy to see OpenAI say, in some of these responses, we can't answer your question. The more we see that, I think that would break the cycle that would lead to a lot of inappropriate conduct or self-harm," she stated.

However, these examples are selectively chosen demonstrations of desired model behavior. Sycophancy, or an AI chatbot's excessive agreeableness, was already listed as prohibited in earlier Model Spec versions, yet ChatGPT still exhibited it. This was most notable with GPT-4o, a model linked to multiple cases of what experts term "AI psychosis."

Robbie Torney, senior director of the AI program at the child protection nonprofit Common Sense Media, voiced concerns about possible contradictions within the Model Spec's under-18 rules. He pointed out conflicts between safety-oriented directives and the "no topic is off limits" principle, which instructs models to discuss any subject regardless of sensitivity. "We have to understand how the different parts of the spec fit together," he remarked, adding that some sections may prioritize engagement over safety. His organization's testing found that ChatGPT often mirrors a user's tone, sometimes leading to responses that are contextually unsuitable or misaligned with safety.

In the case of Adam Raine, a teenager who died by suicide after months of conversations with ChatGPT, the chatbot engaged in such mirroring, as shown in their dialogues. The case also revealed that OpenAI's moderation API failed to prevent harmful interactions: it flagged more than 1,000 mentions of suicide and 377 messages containing self-harm content, yet those flags did not stop Adam from continuing his conversations with ChatGPT.

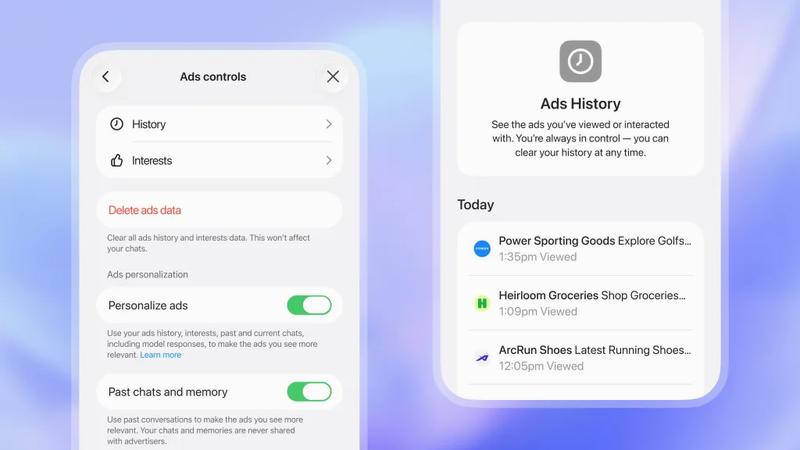

According to OpenAI's updated parental controls document, the company now employs automated classifiers to evaluate text, image, and audio content in real time. These systems aim to detect and block child sexual abuse material, filter sensitive subjects, and identify self-harm. If a prompt indicates a serious safety risk, a small team of trained reviewers assesses the content for signs of "acute distress" and may alert a parent.

Torney commended OpenAI's recent safety initiatives, including its openness in publishing guidelines for users under 18. "Not all companies are publishing their policy guidelines in the same way," he said, referencing Meta's leaked guidelines that permitted its chatbots to have sensual and romantic conversations with children. "This is an example of the type of transparency that can support safety researchers and the general public in understanding how these models actually function and how they're supposed to function."

He added, "I appreciate OpenAI being thoughtful about intended behavior, but unless the company measures the actual behaviors, intentions are ultimately just words." In essence, what this announcement lacks is proof that ChatGPT consistently adheres to the Model Spec guidelines.

Experts suggest these guidelines position OpenAI to get ahead of upcoming legislation, such as California's SB 243, a recently enacted law regulating AI companion chatbots that takes effect in 2027. The updated Model Spec language reflects key requirements of the law, including prohibitions on chatbots engaging in conversations about suicidal thoughts, self-harm, or sexually explicit content. The law also requires platforms to alert minors every three hours, reminding them that they are conversing with a chatbot and should take a break.

When questioned about the frequency of such reminders in ChatGPT, an OpenAI spokesperson did not provide specifics, stating only that models are trained to identify as AI and remind users of that fact, with break reminders implemented during "long sessions."

The company also introduced two new AI literacy guides for parents and families. These resources offer conversation starters and advice to help parents discuss AI's capabilities and limits with teens, foster critical thinking, establish healthy boundaries, and approach sensitive subjects. Collectively, these documents formalize a shared-responsibility approach: OpenAI defines model behavior and supplies families with a framework for monitoring usage.

This emphasis on parental responsibility is significant, as it echoes common Silicon Valley perspectives. In its recent recommendations for federal AI regulation, venture capital firm Andreessen Horowitz advocated for more disclosure requirements rather than restrictive rules regarding child safety, placing greater responsibility on parents.

Several of OpenAI's principles—prioritizing safety during value conflicts, directing users to real-world support, and reinforcing the chatbot's non-human identity—are being framed as safeguards for teens. However, given that some adults have also died by suicide or experienced severe delusions after AI interactions, a pressing question remains: Should these defaults apply universally, or does OpenAI consider them trade-offs only warranted for minors? An OpenAI spokesperson responded that the company's safety strategy aims to protect all users, noting the Model Spec is just one part of a multi-layered approach.

Li observed that the landscape for legal requirements and tech company intentions has been a "bit of a wild west" thus far. However, she believes laws like SB 243, which compel tech companies to publicly disclose their safeguards, will shift the paradigm. "The legal risks will show up now for companies if they advertise that they have these safeguards and mechanisms in place on their website, but then don't follow through with incorporating these safeguards," Li explained. "Because then, from a plaintiff's point of view, you're not just looking at the standard litigation or legal complaints; you're also looking at potential unfair, deceptive advertising complaints."
