AI Memory Costs Surge 7x as Hyperscalers Face Critical Infrastructure Challenge

By admin | Feb 17, 2026 | 2 min read

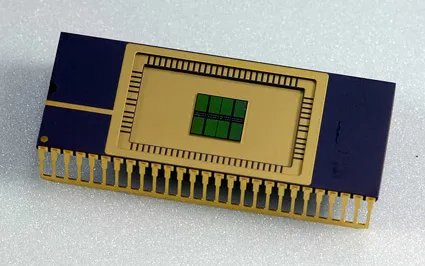

Discussions about AI infrastructure costs often center on Nvidia and GPUs, yet memory is becoming a crucial piece of the puzzle. With hyperscalers investing billions in new data centers, DRAM chip prices have surged approximately sevenfold over the past year. At the same time, there is a growing emphasis on orchestrating memory effectively to ensure data reaches the right agent precisely when needed. Organizations that excel in this area can execute the same queries using fewer tokens, a factor that can determine whether a business survives or fails.

Semiconductor analyst Dan O’Laughlin offers an insightful perspective on the significance of memory chips on his Substack, in a conversation with Val Bercovici, chief AI officer at Weka. Both come from a semiconductor background, so their discussion leans more toward chips than broader architecture, though the implications for AI software are substantial. One particularly striking passage covers Bercovici’s observations on the growing complexity of Anthropic’s prompt-caching documentation.

Bercovici points to Anthropic’s prompt caching pricing page as a telling example. Six or seven months ago, around the launch of Claude Code, the page was straightforward, essentially advising, “use caching, it’s cheaper.” Now, he says, it has grown into an extensive guide to how many cache writes to pre-purchase. The options are 5-minute tiers, which are industry-standard, or 1-hour tiers, with nothing longer available. He sees this shift as a significant indicator. There are also arbitrage opportunities in cache read pricing, depending on how many cache writes have been pre-purchased.

The core consideration is how long Claude retains prompts in cached memory: users can opt for a 5-minute window or pay more for an hour-long window. Accessing data still in the cache is far cheaper, so effective management can lead to substantial savings. However, there is a catch: introducing new data to a query might displace other information from the cache window. While these mechanics are complex, the conclusion is clear: memory management in AI models will be a pivotal aspect of AI development. Companies that master it are poised to lead the field, and there is ample room for progress in this emerging area.
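To make the trade-off between the two windows concrete, here is a minimal sketch of the arithmetic. Everything in it is an assumption for illustration: the `CachePricing` class, the `prefix_cost` function, and the price multipliers are hypothetical and not taken from any provider's pricing page. The sketch also assumes that reading a cached prefix resets its expiry timer, and it ignores eviction caused by other data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CachePricing:
    """Hypothetical price multipliers, relative to the base input price."""
    write_multiplier: float  # cost of writing a prefix into the cache
    read_multiplier: float   # cost of reading a prefix that is still cached
    ttl_seconds: int         # how long an entry survives without being read


# Illustrative values only; check the provider's current pricing page.
FIVE_MINUTE = CachePricing(write_multiplier=1.25, read_multiplier=0.10, ttl_seconds=300)
ONE_HOUR = CachePricing(write_multiplier=2.00, read_multiplier=0.10, ttl_seconds=3600)


def prefix_cost(request_times, prefix_tokens, base_price_per_token, pricing=None):
    """Cost of sending the same prefix at each time in request_times (seconds).

    With pricing=None the prefix is billed at the full input price every time.
    Otherwise a request within ttl_seconds of the previous one counts as a
    cache read (assumed to refresh the entry); any other request pays for a
    fresh cache write.
    """
    total = 0.0
    last_seen = None
    for t in sorted(request_times):
        if pricing is None:
            multiplier = 1.0
        elif last_seen is not None and t - last_seen <= pricing.ttl_seconds:
            multiplier = pricing.read_multiplier
        else:
            multiplier = pricing.write_multiplier
        total += prefix_tokens * base_price_per_token * multiplier
        last_seen = t
    return total
```

Under these assumed numbers, six requests spaced ten minutes apart with a 10,000-token prefix and a base price of 1 unit per token cost 60,000 units with no caching, 75,000 with the five-minute window (every request misses and pays for a new write), and 25,000 with the one-hour window (one write, five reads). Which window pays off depends on how often the prefix is reused.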

Last October, I wrote about a startup named TensorMesh, which focuses on cache optimization, one layer in the stack. Opportunities also exist elsewhere in the architecture. Further down the stack, for instance, data centers are working out how to use different memory types effectively. (The interview touches on when DRAM chips are preferred over HBM, though it goes deep into hardware specifics.) Higher up the stack, end users are learning to structure their model swarms to take advantage of shared caches.

As memory orchestration improves, companies will consume fewer tokens, making inference more affordable. Concurrently, models are becoming more efficient at processing each token, driving costs down even further. With server expenses declining, many applications currently deemed unviable will gradually approach profitability.
